Cursor released Composer 2 – Is it dethroning Opus 4.6?

Cursor just released Composer 2, a new in-house AI model for coding priced at $0.50 per million input tokens and $1.50 per million output tokens. That is much cheaper than Claude Opus 4.6 ($5.00/$25.00) and GPT-5.4 ($2.50/$15.00).


Summary:

Cursor’s new in-house coding model beats Claude Opus 4.6 on key benchmarks while costing a fraction of the price.

Early impressions are mixed — some users report a clear quality jump, others aren’t convinced.

(Update) My test results for the Composer 2 model in Cursor

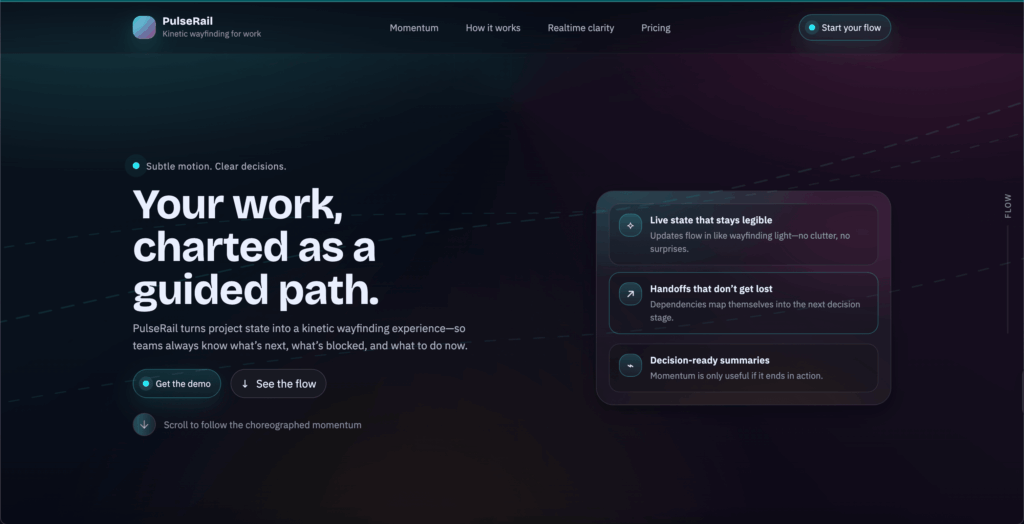

I used a simple landing page prompt to test the new model and see how it handles a straightforward real-world task.

Here are the results of my quick test:

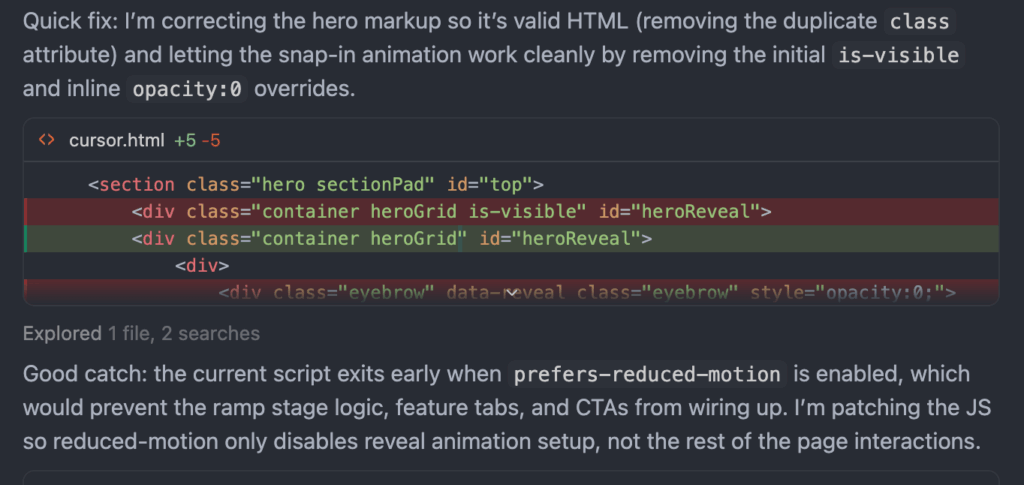

One difference I noticed compared to Claude Code (Opus 4.6): during the test, Composer 2 went back on its own and fixed a few issues mid-generation, before I even noticed anything was off.

If you want to see how Opus 4.6 performs on the same type of task, check out my full head-to-head battle between Claude Code and Cursor for a direct comparison.

Key benchmark results:

The model scores 61.3 on Cursor’s internal CursorBench, a big jump from Composer 1.5 (44.2). That puts it ahead of Claude Opus 4.6 (58.2) and just behind GPT-5.4 Thinking (63.9).

Terminal-Bench 2.0 Scores

| Model | Terminal-Bench 2.0 score (%) |
|---|---|
| GPT-5.4 | 75.1 |
| Composer 2 | 61.7 |
| Opus 4.6 | 58.0 |
| Opus 4.5 | 52.1 |
| Composer 1.5 | 47.9 |

People’s thoughts about the new release:

Looking at early user reactions, the feedback is mixed. Some users report a noticeable improvement in performance and output quality, while others say it feels worse than before.

One user on X (Twitter) shared positive feedback on Composer 2:

@wesbos built a “Gif zoetrope generator” to test the new model, and in his opinion it performed pretty well, especially given that it costs 10× less than Opus 4.6.

Cursor just launched Composer 2 – their own model.

It's 10× cheaper than Opus 4.6 and supposed to rival it.

I've been using it for a few days, I don't have any skewed graphs to show you but from a pure vibes POV I can tell you it's pretty good™

My litmus test right now is if… pic.twitter.com/uqwy1f5yYy

— Wes Bos (@wesbos) March 19, 2026

Key model details:

- $0.50 per million input tokens and $1.50 per million output tokens

- 200,000-token context window

- Two versions: Standard and Fast

- Self-summarization for long-running tasks
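To make the pricing gap concrete, here is a small Python sketch that estimates the cost of a single task from its token counts. The per-million-token prices are the ones quoted in this article; the workload (2 million input tokens, 200,000 output tokens) is a made-up example, and the calculation ignores any discounts, caching, or plan-specific pricing that may apply in practice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


# Prices as quoted in this article (USD per million tokens).
PRICES = {
    "Composer 2": Pricing(0.50, 1.50),
    "Claude Opus 4.6": Pricing(5.00, 25.00),
    "GPT-5.4": Pricing(2.50, 15.00),
}


def task_cost(p: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD for one task, given its token counts."""
    return (input_tokens * p.input_per_million
            + output_tokens * p.output_per_million) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 2M input tokens, 200K output tokens.
    for name, pricing in PRICES.items():
        print(f"{name}: ${task_cost(pricing, 2_000_000, 200_000):.2f}")
```

For this hypothetical workload the script prints $1.30 for Composer 2, $15.00 for Opus 4.6, and $8.00 for GPT-5.4, roughly an 11.5× gap versus Opus. The exact ratio depends on the input/output mix: Composer 2 is 10× cheaper per input token and about 16.7× cheaper per output token.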

Comparing Composer 2 to older models

Composer 2 is roughly 57% cheaper than Composer 1.5.

| Model | CursorBench | Terminal-Bench 2.0 | SWE-bench Multilingual |
|---|---|---|---|
| Composer 2 | 61.3 | 61.7 | 73.7 |
| Composer 1.5 | 44.2 | 47.9 | 65.9 |
| Composer 1 | 38.0 | 40.0 | 56.9 |

Why Was Composer 2 Released?

Owning a proprietary model cuts costs and lets Cursor train on real developer workflows that general-purpose labs don’t have access to.

Composer 2 is Cursor’s most ambitious version yet. It is the first Composer model to run continuous pre-training, giving it a stronger foundation for reinforcement learning.

The standout innovation is self-summarization: instead of forgetting context mid-task, the model is trained to compact its own history and keep going. This results in 50% fewer compaction errors and lets the model work through complex refactors spanning hundreds of steps without losing the thread.
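Cursor hasn’t published how self-summarization works internally, and in Composer 2 it is a trained model behavior rather than a simple wrapper. The Python sketch below only illustrates the general idea of compaction: when an agent’s history exceeds a token budget, older entries are folded into a single summary while the most recent steps are kept verbatim. Everything here is hypothetical: `compact_history`, the word-count "tokenizer", and the stub summarizer (which a real agent would replace with a model call) are illustrative names, not Cursor APIs.

```python
from typing import Callable, List


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def compact_history(history: List[str], budget: int, keep_recent: int,
                    summarize: Callable[[List[str]], str]) -> List[str]:
    """If history exceeds the token budget, fold older entries into one summary entry.

    The `keep_recent` newest entries are always kept verbatim.
    """
    if keep_recent < 1:
        raise ValueError("keep_recent must be at least 1")
    total = sum(count_tokens(m) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history  # within budget, or nothing old enough to fold
    older, recent = history[:-keep_recent], history[-keep_recent:]
    return [summarize(older)] + recent


def naive_summary(messages: List[str]) -> str:
    # Hypothetical summarizer: keeps only the first word of each older step.
    return "SUMMARY: " + " | ".join(m.split()[0] for m in messages)


if __name__ == "__main__":
    history = ["read file a.py", "edit function foo",
               "run tests: 3 failing", "fix import in b.py"]
    print(compact_history(history, budget=10, keep_recent=2,
                          summarize=naive_summary))
```

Here the history totals 14 "tokens", so with a budget of 10 the first two steps collapse into `SUMMARY: read | edit` and the last two are kept as-is. This single pass does not guarantee the compacted history fits the budget; a real agent would likely repeat compaction or ask for a shorter summary, and the quality of the summary is exactly where a trained approach like Composer 2’s would matter.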

If you want to dive deeper into Cursor and track any key updates that the team releases, head over to our Cursor breakdown page.